UPDATE, 10/17/2017: This post hasn’t aged well, and needs some patching. The title should be “TTR is more important than TBF (for most types of F).” Why? Because taking the statistical mean of TTR or TBF makes absolutely no sense whatsoever. Incidents and events simply are not comparable in that way, and even if they were, the time an event starts is infinitely negotiable.

Present Allspaw tells past Allspaw: you were wrong, buddy.

This week I gave a talk at QCon SF about development and operations cooperation at Etsy and Flickr. It’s a refresh of talks I’ve given in the past, with more detail about how it’s going at Etsy. (It’s going excellently 🙂 )

There’s a bunch of topics in the presentation slides, all centered around roles, responsibilities, and intersection points of domain expertise commonly found in development and operations teams. One of the not-groundbreaking ideas that I’m finally getting down is something that should be evident for anyone practicing or interested in ‘continuous deployment’:

Being able to recover quickly from failure is more important than having failures less often.

This has what should be an obvious caveat: some types of failures shouldn’t ever happen (failures resulting in accidental data loss, for example), and not all failures/degradations/outages are the same.

Put another way:

MTTR is more important than MTBF

(for most types of F)

(Edited: I did say originally “MTTR > MTBF”)
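
As a rough illustration of the trade-off (not something from the talk), the usual steady-state availability formula, MTBF / (MTBF + MTTR), treats halving repair time and doubling the time between failures as equivalent. The numbers below are hypothetical, and the `availability` function is made up for this sketch:

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time the service is up."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical service: fails about every 100 hours, takes 1 hour to fix.
baseline = availability(mtbf_hours=100.0, mttr_hours=1.0)

# Option A: fail half as often (double MTBF).
doubled_mtbf = availability(mtbf_hours=200.0, mttr_hours=1.0)

# Option B: recover twice as fast (halve MTTR).
halved_mttr = availability(mtbf_hours=100.0, mttr_hours=0.5)

print(f"baseline:     {baseline:.4%}")
print(f"doubled MTBF: {doubled_mtbf:.4%}")
print(f"halved MTTR:  {halved_mttr:.4%}")
```

Both options land on the same availability, so the case for MTTR rests on which of the two is cheaper and quicker to improve, not on the arithmetic.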

What I’m definitely not saying is that failure should be an acceptable condition. I’m positing that since failure will happen, it’s just as important (or in some cases more important) to spend time and energy on your response to failure as on trying to prevent it. I agree with Hammond, when he said:

If you think you can prevent failure, then you aren’t developing your ability to respond.

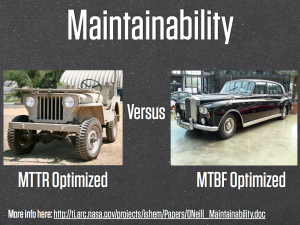

In a complete steal of Artur Bergman‘s material, an example in the slides of the talk is of the Jeep versus Rolls Royce:

Artur has a Jeep, and he’s right when he says that for the most part, Jeeps are built to optimize Mean-Time-To-Repair, rather than following the classical approach to automotive engineering, which is to optimize Mean-Time-Between-Failures. This is likely because Jeep owners have been beating the shit out of their vehicles for decades, and every now and again, they expect that abuse to break something. Jeep designers know this, which is why Jeeps are so damn easy to repair. Nuts and bolts are easy to reach, tools are included when you buy the thing, and if you haven’t seen the video of Army personnel disassembling and reassembling a Jeep in under 4 minutes, you’re missing out.

The Rolls Royce, on the other hand, likely doesn’t have such adventurous owners, and when it does break down, it’s a fine and acceptable thing for the car to be out of service for a long and expensive fixing by the manufacturer.

We as web operations folks want our architectures to be optimized for MTTR, not for MTBF. I think that the reasons should be obvious, and the fact that practices like:

- Dark launching

- Percentage-based production A/B rollouts

- Feature flags

are becoming commonplace should verify this approach as having legs.
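
To make the last two items concrete, here is a minimal sketch of a percentage-based feature flag. It is not Etsy's or Flickr's implementation; the function names, the flag name, and the hashing scheme are all assumptions made for illustration. The point is that turning a feature off is a one-number change rather than a deploy, which is what keeps recovery fast:

```python
import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0..99."""
    key = f"{flag_name}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, percentage: int) -> bool:
    """Return True if this user falls inside the rollout percentage.

    percentage=0 turns the feature off for everyone (the fast "repair"),
    percentage=100 turns it on for everyone.
    """
    return rollout_bucket(flag_name, user_id) < percentage


# Hypothetical flag: ramp a new search backend to 10% of users.
users = [f"user-{n}" for n in range(1000)]
exposed = [u for u in users if is_enabled("new_search", u, 10)]
print(f"{len(exposed)} of {len(users)} users see the new code path")
```

Because the bucket is derived from a hash rather than a random draw, a given user stays in or out of the rollout across requests, and raising the percentage only ever adds users.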

The slides from QConSF are here:

I like the idea of measuring systems architecture around MTTR:MTBF ratios.

I’ve worked in both 10:1 and 1:10 extremist shops and usually strive towards the center rather than either extreme. It has to be aligned with the culture and business though, especially when a dichotomy forms between ops/dev. My experience is that lower MTTR requires more OPEX, where higher MTBF is more CAPEX intensive. That’s the view of the business folk anyways, and generally pans out that way, especially if you do it right.

Great topic, nice to see folks writing about this. Some observations, if I may:

It is misleading to write MTTR > MTBF even with an explanation next to it. This means your meme won’t get off the ground because people will largely disagree with it, even if they agree with your premise behind it (that > means better, not greater). People know these mean times are measurements of time, and so > means “more time” not “more quality”. You know that, I know that, everyone knows that.

So that means Al is barking up the wrong tree, because a MTTR:MTBF ratio should ALWAYS be lower:higher. Besides which, are we talking about components or services here? I hope we’re talking about services, but I think you’re talking about components (that might be redundant and therefore we might be happy to have a long MTTR or one of infinity, i.e. never repair it and just throw it away).

When it comes to components I’m with you on (1) expect failure, and (2) plan what’s best to do about it. It’s just that I think for (2) you can do (at least) three things:

1) Ignore it and let it die, dispose of the corpse later.

2) Rebuild it (fast)

3) Repair it (slow)

When it comes to services comprising multiple components (including people-type components!) then MTBF is easy to monitor but MTTR is a whole new ball game…

Keep it up, love the chat on this.

Steve

Steve: indeed, I’m talking about the greater web service, not components. While MTBF has historically pointed to components, the context here is with failures that manifest in web site or API services degradations or outages.

The topic is ripe for many nuances. Given that all failures, degradations, and outages can have varying severities and values to any given business, the overall points are to be balanced with context. My value on those varying metrics today may absolutely be different than yours, and indeed might even be different for my own, tomorrow. There’s no dictating a one-true-way on those. 🙂

Well aware of the ‘greater than’ issue when I wrote it, I’ll just have to count myself terrible at meme spreading. For those reading any of the material here, I trust that they’ll understand that I’m probably not saying that the length of time to resolve an issue/outage is somehow greater than the mean time between those failures. But: it’s a fine point, I’ve fixed it to be verbose but clear.

Glad to see this getting some discussion. At Inktomi we ended up focusing on MTTR primarily because once MTBF was decent, it was much more productive to reduce MTTR, which has a nice short edit-compile-debug cycle.

I wrote about this a while back:

http://www.cs.berkeley.edu/~brewer/papers/GiantScale.pdf

However, MTTR is more important today in part due to the increased complexity of failures.

-Eric

Excellently? Get an adult to help you with writing next time.

Thanks for helping me winnow my RSS feeds.

It depends on your clients. Are you selling Jeeps or Rolls Royces? Different people want different things. Enterprise customers buying SaaS tend to be much more interested in MTBF, while smaller concerns generally want features fast (which you don’t get with a high MTBF emphasis) and are happy with occasional live bugs but low MTTR.

Enterprise customers will take more than a year to even decide on a SaaS, whereas smaller companies would be out of business if they took that long to decide.

When you’re getting something going (startup) you probably need to focus on MTTR, because your early adopters are going to be clamoring for the features and forgiving about the (low-MTTR) bugs. When/if you hit the big time and start serving really big companies, you will end up in long, acrimonious meetings about why X was allowed to go live if it wasn’t bulletproof yet. Even when the fix goes out way before the meeting convenes, the pain continues.

You don’t tell a millionaire that he just needs to unbolt the seat of his Rolls and replace a fuse and the radio will be working again. “Honestly, it’ll take fifteen minutes, tops!”. Good luck with that :).

None of this negates what you are saying, just the scope of where it applies.

I thought the post was pretty “excellently” myself. Sometimes you need to use the right word, “irregardless” of its status as a grammatically correct golden boy. Grouch.

Hello Kitchen Soap Blogging Team,

I ran across your blog and saw some excellent content that really illustrates this growing umbrella topic of DevOps. I’m a content curator for DZone.com and I’d actually like to invite you to join our MVB program (www.dzone.com/aboutmvb) if that description seems interesting to you.

We have over 300 MVBs at DZone currently, and most of them say that they definitely notice an increase in traffic because of our links back to original postings. They also say that their voice is much louder on DZone and has a chance to be heard by a much wider audience. We even have some ‘big name’ MVBs including Ted Neward, Martin Fowler, and Ben Forta. It’s pretty simple to join, you just fill out a one page consent form and that’s it! Send me an email and let me know what you think.

We’d love to have you as a leading voice in our upcoming DevOps topic page.

Feel free to contact me at my direct email address: katie (at) dzone (dot) com

Thanks!

-Katie McKinsey-
