I thought it might be worth digging a bit deeper into something I mentioned in the Advanced Postmortem Fu talk I gave at last year’s Velocity conference.

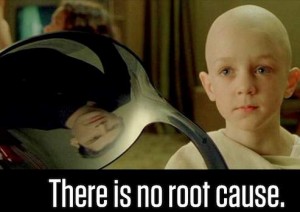

For complex socio-technical systems (web engineering and operations), there is a myth that deserves to be busted: the assumption that outages and accidents have a single unifying event that triggers the chain of events leading to the outage.

This is actually a fallacy, because for complex systems:

there is no root cause.

This isn’t entirely intuitive, because it goes against our nature as engineers. We like to simplify complex problems so we can work on them in a reductionist fashion. We want there to be a single root cause for an accident or an outage, because if we can identify that, we’ve identified the bug that we need to fix. Fix that bug, and we’ve prevented this issue from happening in the future, right?

There’s also a strong tendency in causal analysis (especially in our field, IMHO) to find the single place where a human touched something, and point to that as the “root” cause. Those dirty, stupid humans. That way, we can put the singular onus on “lack of training”, or the infamously terrible label “human error.” This, of course, isn’t a winning approach either.

But, you might ask, what about the “Five Whys” method of root cause analysis? Starting with the outcome and working backwards towards an originally triggering event along a linear chain feels intuitive, which is why it’s so popular. Plus, those Toyota guys know what they’re talking about. But it also falls prey to the same issue with regard to assumptions surrounding complex failures.

This excellent post in Workplace Psychology rightly points out a key limitation of the Five Whys:

An assumption underlying 5 Whys is that each presenting symptom has only one sufficient cause. This is not always the case and a 5 Whys analysis may not reveal jointly sufficient causes that explain a symptom.

There are some other limitations of the Five Whys method outlined there, such as it not being an idempotent process, but the point I want to make here is that linear mental models of causality don’t capture what is needed to improve the safety of a system.
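To make the single-branch problem concrete, here is a minimal Python sketch. It assumes a hypothetical incident whose conditions have been recorded as a small “why?” graph; every name in `WHY`, and both functions, are made up for illustration. `five_whys` keeps only the first answer at each step, the way a linear chain does, while `all_contributors` walks every branch.

```python
# Hypothetical incident: each effect maps to the conditions that jointly produced it.
WHY: dict[str, list[str]] = {
    "site outage": ["database overload", "failover did not trigger"],
    "database overload": ["cache flushed during deploy", "peak traffic"],
    "failover did not trigger": ["health check misconfigured"],
    "cache flushed during deploy": ["deploy script default changed"],
}


def five_whys(effect: str, max_whys: int = 5) -> list[str]:
    """Linear chain: ask "why?" up to max_whys times, keeping only the first answer."""
    chain = [effect]
    for _ in range(max_whys):
        answers = WHY.get(chain[-1])
        if not answers:
            break  # nothing recorded further back, so the chain stops at its "root cause"
        chain.append(answers[0])
    return chain


def all_contributors(effect: str) -> set[str]:
    """Collect every recorded condition behind the effect, across all branches."""
    seen: set[str] = set()
    stack = [effect]
    while stack:
        for cause in WHY.get(stack.pop(), []):
            if cause not in seen:
                seen.add(cause)
                stack.append(cause)
    return seen


if __name__ == "__main__":
    chain = five_whys("site outage")
    print(" -> ".join(chain))
    missed = all_contributors("site outage") - set(chain)
    print("never examined:", sorted(missed))
```

The linear walk produces one tidy chain ending at the deploy script and silently drops the misconfigured health check and the traffic peak, which were just as necessary. Even the exhaustive walk is only a tree of recorded conditions, which is itself a simplification of how components actually interact.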

Generally speaking, linear chain-of-events approaches are akin to viewing the past as a line-up of dominoes, and complex systems simply don’t work like that. Looking at an accident this way ignores surrounding circumstances in favor of a cherry-picked list of events, validates hindsight and outcome bias, and focuses too much on components and not enough on the interconnectedness of components.

During stressful times (like outages), people involved with response, troubleshooting, and recovery also often misremember the events as they happened, sometimes unconsciously neglecting critical facts and the timing of observations, assumptions, etc. This can obviously affect the results of using a linear accident investigation model like the Five Whys.

However, identifying a singular root cause and a linear chain that stems from it makes things very easy to explain, understand, and document. This can help us feel confident that we’re going to prevent future occurrences of the issue, because there’s just one thing to fix: the root cause.

Even the eminent cognitive engineering expert James Reason’s epidemiological (the “Swiss Cheese”) model exhibits some of these limitations. While it does help capture multiple contributing causes, the mechanism is still linear, which can encourage people to think that if they were only to remove one of the causes (or fix a ‘barrier’ to a cause in the chain), they’d be protected in the future.

I will, however, point out that having an open and mature process of investigating causality, using any model, is a good thing for an organization, and the Five Whys can help kick off the critical thinking needed. So I’m not knocking the Five Whys as a practice with no value; it’s just limited in its ability to identify items that can help bring resilience to a system.

Again, this tendency to look for a single root cause for fundamentally surprising (and usually negative) events like outages is ubiquitous, and hard to shake. When we’re stressed for technical, cultural, or even organizationally political reasons, we can feel pressure to get to resolution on an outage quickly. And when there’s pressure to understand and resolve a (perceived) negative event quickly, we reach for oversimplification. Some typical reasons for this are:

- Management wants a quick answer to why it happened, and they might even look for a reason to punish someone for it. When there’s a single root cause, it’s straightforward to pin it on “the guy who wasn’t paying attention” or who “is incompetent”.

- The engineers involved with designing/building/operating/maintaining the infrastructure touching the outage are uncomfortable with the topic of failure or mistakes, so the reaction is to get the investigation over with. This encourages oversimplification of the causes and remediation.

- The failure is just too damn complex to keep in one’s head. Hindsight bias encourages counterfactual thinking (“…if only we had paid attention, we could have seen this coming!” or “…we should have known better!”), which pushes us into thinking the cause is simple.

So if there’s no singular root cause, what is there?

I agree with Richard Cook’s assertion that failures in complex systems require multiple contributing causes, each necessary but only jointly sufficient.

Hollnagel, Woods, Dekker and Cook point out in this introduction to Resilience Engineering:

Accidents emerge from a confluence of conditions and occurrences that are usually associated with the pursuit of success, but in this combination–each necessary but only jointly sufficient–able to trigger failure instead.
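The “each necessary but only jointly sufficient” idea can be made concrete with a deliberately simplistic Python sketch. It assumes a hypothetical incident modeled as a plain conjunction of four made-up conditions. Real failures are not this tidy, since interactions are nonlinear and emergent, but even this toy shows why picking “the” root cause is arbitrary.

```python
# Hypothetical conditions for a toy incident; none of these come from a real postmortem.
CONDITIONS = frozenset({"config push", "cold cache", "traffic spike", "muted alert"})


def failure_occurs(present: frozenset[str]) -> bool:
    """Toy model: the failure emerges only when every condition is present at once."""
    return CONDITIONS <= present


def is_necessary(condition: str) -> bool:
    """Necessary: with everything else present, removing this one averts the failure."""
    return not failure_occurs(CONDITIONS - {condition})


def is_sufficient_alone(condition: str) -> bool:
    """Sufficient alone: this condition by itself triggers the failure."""
    return failure_occurs(frozenset({condition}))


if __name__ == "__main__":
    for c in sorted(CONDITIONS):
        print(f"{c:14} necessary={is_necessary(c)} sufficient_alone={is_sufficient_alone(c)}")
    print("jointly sufficient:", failure_occurs(CONDITIONS))
```

Every condition comes out necessary and none sufficient on its own, so naming any single one as the root cause says more about where the investigation stopped than about the failure itself.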

Frankly, I think that this tendency to look for singular root causes also comes from how deeply entrenched the tenets of reductionism are in modern science and engineering. So I blame Newton and Descartes. But that’s for another post. 🙂

Because complex systems have emergent behaviors, not resultant ones, it can be put another way:

Finding the root cause of a failure is like finding a root cause of a success.

So what does that leave us with? If there’s no single root cause, how should we approach investigating outages, degradations, and failures in a way that can help us prevent, detect, and respond to such issues in the future?

The answer is not straightforward. In order to truly learn from outages and failures, systemic approaches are needed, and there are a couple of them mentioned below. Regardless of the implementation, most systemic models recognize these things:

- …that complex systems involve not only technology but organizational (social, cultural) influences, and those deserve equal (if not more) attention in investigation

- …that fundamentally surprising results come from behaviors that are emergent. This means they can and do come from components interacting in ways that cannot be predicted.

- …that nonlinear behaviors should be expected. A small perturbation can result in catastrophically large and cascading failures.

- …that human performance and variability are not intrinsically coupled with causes. Terms like “situational awareness” and “crew resource management” are blunt concepts that can mask the reasons why it made sense for someone to act the way they did with regard to a contributing cause of a failure.

- …that diversity of components and complexity in a system can augment the resilience of a system, not simply bring about vulnerabilities.

For the real nerdy details, Zahid H. Qureshi’s A Review of Accident Modelling Approaches for Complex Socio-Technical Systems covers the basics of current thinking on systemic accident models: Hollnagel’s FRAM, Leveson’s STAMP, and Rasmussen’s framework are all worth reading about.

Also appropriate for further geeking out on failure and learning:

Hollnagel’s talk, On How (Not) To Learn From Accidents

Dekker’s wonderful Field Guide To Understanding Human Error

So the next time you read or hear a report with a singular root cause, alarms should go off in your head. In the same way that you should never have “human error” as a root cause, if you only have a single root cause, you haven’t dug deep enough. 🙂

Thanks a lot. Very interesting!!! Now I will think about it the whole weekend… (BTW, PDF links are broken)

I tend to read industrial, aviation/transportation, and maritime postmortems, which are usually more public than datacenter failures. The classic write-up is Three Mile Island, though the Air France crash was particularly chilling and a cascading failure. Almost all (all?) aviation accidents are cascades, so your point is very valid, though instead of saying “there is no root cause”, I would say “the cause is likely this cascading sequence of failures”. For example:

http://www.popularmechanics.com/print-this/what-really-happened-aboard-air-france-447-6611877?page=all

Basically:

1) Captain takes break, leaves #3 pilot in charge, not #2

2) Radar set incorrectly

3) Weird weather throws in confusing temp changes and smells into cockpit

4) Speed sensor freezes up

5) Autopilot by design disconnects

6) Avoiding weather, climbs w/o adding thrust

7) Stall

8) Both pilots hold the nose up instead of diving to gain lift

9) 228 people lose their lives

On a less academic level, I find myself addicted to NatGeo shows like “Seconds to Disaster” and any other kind of engineering disaster documentary. In every single case, these disasters, whether a dam collapsing, a ferry sinking, or a chemical plant accidentally gassing thousands, the causes are multiple, interactive, and often multiplicative.

I’m really enjoying this series of blog posts, by the way. I, too, work on complex systems, and I love systems thinking. Too many people focus in on a single component of a system and forget the ecology. Thanks!

the discussion of systems thinking in peter senge’s book “the fifth discipline” surveys some of the consequences (technical and otherwise) that commonly befall organizations who can’t internalize the lesson(s) in this post. behind the initial aphorisms and catchphrases, he does an excellent job analyzing various archetypal cases involving feedback loops and leverage. he provides some thought-provoking practical approaches to improving one’s ability to get past the single-cause, pure-linear mindset, and does better than “you gotta look at the big picture”. a great post, thank you.

@Lee Thompson Step #8 is the root cause. Barring mechanical failures, stalls are 100% recoverable with sufficient altitude, provided the pilot dives instead of climbs.

Lee: agreed that there’s a wealth of excellent stuff found in public postmortems, including those from NASA. And you’re certainly right that the Air France 447 case is chock full of all sorts of good info.

And agreed that cascades help illuminate multiple contributing causes. Just as long as the investigation includes sufficient surrounding information on each of the pieces, and recognizes the dynamic interconnectedness of them. 🙂

Patrick: there isn’t a singular root cause of AF-447. They wouldn’t have been in the situation they were in, for many reasons. There was bad weather. The sensors froze. There was unclear reasoning with respect to pilot behavior. There was confusion from instrument readings. Lots of things in combination, essentially.

They climbed instead of dove, for a reason. What that reason was, or why it made sense to them (otherwise they wouldn’t have done it) is key to working out how similar accidents can be prevented.

The Safety Review Panel for Air France included both Hollnagel and Woods, and their findings are here, outlining numerous organizational workings at the blunt end that undoubtedly contributed to the environment surrounding pilot performance, attitudes towards safety, and pilots’ ability to voice concerns to the business.

Included are these gems:

“We were repeatedly told by pilots and others that line managers are not empowered to hold pilots accountable for improper actions.”

“The General Operations Manual allows Captains to deviate from Air France procedures, if necessary, for the safety of the aircraft and passengers. Similar authority is universally accepted internationally and is phrased “if a greater emergency exists”. Unfortunately there is a small percentage of Captains who abuse this general guidance and routinely ignore some rules.”

“Unfortunately FDM (Flight Data Monitoring) is seen by some pilots as a “policeman” and not as a proactive tool to improve procedures and safety.”

Again: multiple contributing causes, each only jointly sufficient. 🙂

There’s an entire field that studies just this – Human Performance Improvement (aka Human Performance Technology, HPT), with many models that focus on the systemic causes of existing failures, as well as considering the system dynamics when evaluating possible interventions.

If interested in more information, ISPI is a decent place to start – http://www.ispi.org/. Or you can study Gilbert’s Behavioral Engineering Model, Mager and Pipe’s Performance Analysis Flowchart, Dessinger and Moseley’s Full Scope Evaluation, Rummler and Brache’s Performance Matrix, and the International Society for Performance Improvement’s Ten Standards of Performance.

Pingback: DYSPEPSIA GENERATION » Blog Archive » “There Is No Root Cause”

John,

Excellent post. Over 10 years ago (pre-NoSQL) I worked with a company that wanted to create an IT/Operations data warehouse solution that ran their ETL2 processing on 15-minute and 60-minute timelines, as opposed to the traditional 24-hour process. When I first talked to them I thought they were crazy. However, after further discussion they explained that they felt it was impossible to capture incidents in real time (i.e., the single-pane-of-glass mentality). They wanted to create an operations team of data analysts who watched the four walls of their organization’s operations at the aggregate levels of 15- and 60-minute intervals. They were eventually acquired, and the acquiring company canned all their brilliant work. However, it was the first time I was forced to think about complex operations.

The next time I was forced to think outside the box was when I saw a presentation around 5 years ago where an Atlanta division of AT&T was showing how they had punted on traditional event correlation and were using Netuitive (predictive analysis) instead. After the speaker and I wrestled for around 30 minutes, the light bulb went off. I realized traditional event correlation was dead, and from that day I started to become a huge fan of CEP. The next time I was blown away was when I heard this presentation at OSCON ( http://www.oscon.com/oscon2010/public/schedule/detail/14865 ) and then a further postmortem on Damon’s and my DevopsCafe podcast ( http://devopscafe.org/show/2010/6/28/episode-4.html ).

I could go on for hours, but as you can tell I am not that good of a writer. Needless to say, your post excellently points out that in IT we had all better start learning how to punt on traditional thinking. IMHO, thinking that human brains will be able to single-handedly keep up with these complex systems is simply arrogant. We need to accept defeat and start using the same kind of weapons the complex systems are using in order to understand them.

Hope to see you at Velocity this year (Hint Hint).

John M Willis

VP of CSE at Enstratus

Pingback: Last weeks top links (week 6, 2012) | Zipfelmaus

Pingback: Stuff I’m reading (weekly) | Jon Stahl's Journal

It looks to me like “imminent” doesn’t mean what you think it means. You probably intended “eminent”.

James: Gah, thanks for that! Fixed.

Something could be learned from NASA & FAA – you really need to make sure your software has a “black box” of sorts so that you can at least see what was happening at the time of the fault.

@Lee Thompson: The problem with just looking at it as a set of cascading failures is that it ignores the “errors of omission” which could have arrested the cascade at multiple points along the way. So, yes, the proximate cause is a sequence… but the reason the cascade could/did happen is a bunch of other failures which did not occur sequentially. Which gets to the “root cause” question.

From your list:

1) Captain takes break, leaves #3 pilot in charge, not #2 |||| Was this consistent with best practice? Was there policy in place which could prevent this if not?

2) Radar set incorrectly |||| How? Were there design failures which let that happen? Policy failures? Training failures?

3) Weird weather throws in confusing temp changes and smells into cockpit |||| Design failure on the plane?

4) Speed sensor freezes up |||| Design failure of sensor? Could there be more instrumentation to detect this fault (if unavoidable)?

5) Autopilot by design disconnects |||| Could there have been more information presented to pilots when this happened to better educate them on what was taking place?

6) Avoiding weather, climbs w/o adding thrust |||| Lack of proper warnings? Training? Insufficient good information to make decisions on?

That’s the sort of analysis which says it’s not a sequence of failures, it’s a host of failures of different magnitude which, given a specific sequence of events, allow catastrophic failure.

Ishikawa Diagrams are also useful tools in trying to find all contributing causes to a failure.

Excellent blog. It’s something I’ve been trying to get into a number of heads over the last few years while dealing with web performance issues. Since you mentioned Descartes, I would like to push a bit for a collection of data center examples around this, since seeing is believing 🙂

In most of my discussions the pushback I got was: “Yeah, but if we had done this, then it wouldn’t have happened, so that is the root cause.” Explaining that there being one action to prevent disaster is not the same as there being one cause for the disaster is not so easy, so having a collection of examples in the bag helps.

Pingback: Management Improvement Blog Carnival #158 » Curious Cat Management Improvement Blog

Pingback: Root Cause Analysis – Having a Deeper Understanding – Empowered High Schools

Elliott Jaques, in his book Requisite Organization, details his far-reaching finding with respect to the nature of human capability:

1. “There is a hierarchy of four ways, and four ways only, in which individuals process information when engrossed in work: declarative, cumulative, serial and parallel.

2. This quartet of processes recurs within higher and higher orders of complexity of information.

3. Each of these processes corresponds to a distinct step in potential capability of individuals.

4. Our study showed a .97 correlation between the universal underlying managerial layering of the managerial hierarchy and each discrete step in complexity of mental process, and thus potential capability.”

The major conclusion from this finding is that: “The existence of the managerial hierarchy is a reflection in organizational life of discontinuous steps in the nature of human capability.”

Individuals will process problems only to the extent of their capability. Different types and levels of problems require different problem solving capabilities. The practices outlined by Elliott Jaques help CEOs and organizations acknowledge these “discontinuous steps” of human capability and place employees in the right roles.

I agree. Root cause analysis for me has never been about finding a single cause. My training in root cause analysis came from studying the Theory of Constraints by Eliyahu Goldratt, published in 1999 and developed earlier than that. He describes a problem-mapping technique that rigorously examines undesirable cause-effect relationships in their full complexity, including showing feedback.

H. William (Bill) Dettmer did a great job expanding on this in his book The Logical Thinking Process, published in 2007. I recommend this to anyone interested in this topic.

So, when these gentlemen talk about root cause they usually mean root causes (plural) and even that is fuzzy. The deeper you go, the closer you get to policy and structural considerations that reach the boundaries of your control and influence. If you focus on superficial problems, well inside your control, then solving them is easy pickings. But you get repeat failures because the root causes are untouched. If you pursue the deeper causes, you spend more energy, because you must use persuasion and education to convince parties outside your control to change.

Pingback: Three Drunken SysAds » (n) Steps to Configuration Management Paradise… | Three Drunken SysAds

Pingback: A human approach to postmortem reviews - Programming

Pingback: Revisiting What is DevOps - O'Reilly Radar

This is a nice summary of the past decade or two of research into accident/incident causation, complex systems, etc. My first exposure to this area of study was in 1994 or so, when I first started working in the nuclear power industry (right out of university). Even at that time, the training I received on root cause analysis suggested that the “single root cause” theory was on the way out, that it would not be unusual to find 2 to 3 root causes for any given problem, and that I also needed to look for contributing causes… things that might be less fundamental, less important, or perhaps just catalysts or risk-increasing factors. So, of course, the first big root cause investigation that I did (for which the vendor said “we don’t do software root causes”) found just one root cause… but at least it wasn’t the one they were expecting (human error in software modification). Imagine their surprise when I told them that their software testing process was so focused on ensuring no unintended changes occurred that it kind of ignored ensuring that intended changes actually operated correctly… and then proceeded to show them that the exact same issue had bitten them on the ass a few times within the preceding couple of years. Anyway, I digress. I could go on and on about this stuff, but in closing, let me just say that you might enjoy reading some of the stuff on my blog. Much of it is right in line with what you’ve talked about in this post. One thing in particular that you might like is my take on the 5 Whys – http://www.bill-wilson.net/b73

Regards!

Bill Wilson

Pingback: The infinite hows - O'Reilly Radar

Pingback: The Infinite Hows (or, the Dangers Of The Five Whys) | Kitchen Soap

Pingback: Required Reading – 2015-04-01 | We Build Devops

Pingback: Practical Postmortems at Etsy | On Skylights

Pingback: 27-12-2015 - This is the end of this year... so these are the leftover links - Magnus Udbjørg

Pingback: 25-01-2016 - As you can see, my young apprentice, your friends have failed. Now witness the firepower of this fully ARMED and OPERATIONAL link package! - Magnus Udbjørg

Pingback: Last weeks top links (week 6, 2012) – tinkr.de

Pingback: SRE Weekly Issue #33 – SRE WEEKLY

Pingback: How to Detox the IT Industry – IT MARKETPLACE

Nice and full of useful information. Even the comments are informative. I have also written a few blog posts on Root Cause Analysis. Here is the link: https://gurnoorinders-blog.blogspot.in/2016/10/analysis-of-root-cause-analysis_3.html

Pingback: SE-Radio Episode 282: Donny Nadolny on Debugging Distributed Systems : Software Engineering Radio

Pingback: SE-Radio Episode 284: John Allspaw on System Failures: Preventing, Responding, and Learning From : Software Engineering Radio

If “Five Whys” is the wrong way, what’s the right way? I find myself nodding along but the advice here is abstract (describing issues) and negative (avoid certain things). In a real world situation I wouldn’t know how to apply the advice, or what problems I’m even trying to avoid.

I’d love it if someone has examples of how the linear cause analysis went wrong and how to do it better. Maybe even a made-up example with two alternate histories.

Lastly – is this advice even applicable to most organizations? In the organizations I’ve recently worked for, we’ve had issues like:

– Literally no one is even watching a metric

– The company depends on heroes doing undocumented one-off tweaks to get through crises

– The CEO loves application work, doesn’t appreciate operations

– The VP Eng is highly political and needs to deliver the news that the problem is solved, in a way that saves face and the board appreciates. A procedure to follow and a promise of never failing again is where they want to go.

Maybe at places like Etsy you had a more enlightened culture, and eliminated the obvious kinds of failure, but the problems I’m dealing with are usually cruder.

I guess what I’m implicitly asking above is: do these insights apply even to companies that are just forming their resilience culture, or do you have to be past the basics?

Neil: thanks for the comments and questions on this.

First, these *are* the basics. This is what you start with. The alternative to the 5 Whys is asking different and better questions about accidents. I’ve written about how simply asking “how” is a good step in the right direction, because “why” asks for explanations, not descriptions: https://www.oreilly.com/ideas/the-infinite-hows

There are multiple references in both this and the piece I linked that can suggest directions to take in different organizations and contexts, but the most direct route to influencing how incidents are explored and understood is to begin with the questions you ask. If they’re powerful enough, the diverse answers they gather will at least show how shallow linear causality approaches are.

As for convincing management to take these approaches, here are some suggestions:

1 – Appeal to authority! It’s normally a logically fallacious tactic, but feel free to point to professional practitioner-researchers’ work that supports these approaches. If the Agile Manifesto was good enough for them, then the pillars of Systems Safety, Human Factors, and Resilience Engineering should be as well. Sidney Dekker’s “Field Guide to Understanding ‘Human Error’” is the canonical intro to these topics, and “Behind Human Error” (Woods, et al.) is the mid-level text.

2 – This is agnostic to operations/app development divides. How people anticipate (design) code is just as relevant to this topic as how failures with code are reacted to.

3 – Start distributing the Debriefing Facilitation Guide (https://extfiles.etsy.com/DebriefingFacilitationGuide.pdf). It was written for this purpose, and if folks are sold on “template-driven” postmortem approaches, this can’t possibly make it any worse.

Pingback: Why incidents can’t be monocausal – Lorin Hochstein

Pingback: The Gamma Knife model of incidents – Lorin Hochstein

Pingback: SRE Weekly Issue #193 – SRE WEEKLY

A recent paper about Air France Flight 447 that takes a situated, in-context approach to understanding the pilots’ actions:

https://www.scielo.br/pdf/gp/v25n3/en_0104-530X-gp-0104-530X1115-17.pdf