This is a ramble continued from before, which means it’s mostly a blog post for me, but maybe others might find it interesting.

The last time I made an analogy between back-end web architectures and mechanical structures, I blathered on about what are basically the structural limitations of individual components in a physical device, and how they’re somewhat analogous to the mechanics of a website’s infrastructure. For example, just like the tie rods, bumpers, and frame on your car, webservers show some amount of “strain” (i.e., resource usage, like CPU) when they are loaded up with “stress” (i.e., requests). Mechanical components have a limited ability to withstand stress, as do web tier components. It’s exactly those load characteristics and ultimate limitations that drive capacity planning.
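To make the stress/strain idea a bit more concrete, here is a minimal sketch of how you might estimate a webserver's ceiling from a few load measurements. Everything in it is an assumption for illustration: the sample numbers are made up, the function names are hypothetical, and it assumes CPU grows linearly with request rate, which real servers usually stop doing as they approach their limits.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def request_ceiling(slope, intercept, cpu_limit_percent):
    """Request rate at which the fitted line reaches the chosen CPU limit."""
    return (cpu_limit_percent - intercept) / slope


# Hypothetical measurements: "stress" (requests/sec) vs. "strain" (CPU %).
requests_per_s = [100, 200, 300, 400]
cpu_percent = [15, 25, 35, 45]

slope, intercept = fit_line(requests_per_s, cpu_percent)

# Assumed safety limit of 85% CPU; pick whatever your own red line is.
ceiling = request_ceiling(slope, intercept, 85)
print(f"CPU per request/sec: {slope:.3f}%, idle overhead: {intercept:.1f}%")
print(f"Estimated ceiling: about {ceiling:.0f} requests/sec per server")
```

With these made-up numbers the fit lands at roughly 800 requests/sec per server. The point is less the number than the shape: measure the strain at several stress levels, then extrapolate to the limit you're willing to run at.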

Spring and Damper, Loaded Under Stress

But anyone who works with web architectures knows that individual components are only part of the story of how much “load” a particular system can take before it degrades (gracefully or not) or fails (gracefully or not).

In a car, bolts connect struts and shocks together, and those can deform non-linearly; bumpers press on frames, which can squeeze firewalls closer to engine blocks; and a myriad of other interconnected influences come into play. The car will drive, crash, and even sit idle according to those stress-strain relationships, and it can be characterized by springs, dampers, and the material properties of its components.

Check out this finite-element simulation of a pickup truck crashing into a rigid wall:

Pickup Truck Crash Simulation

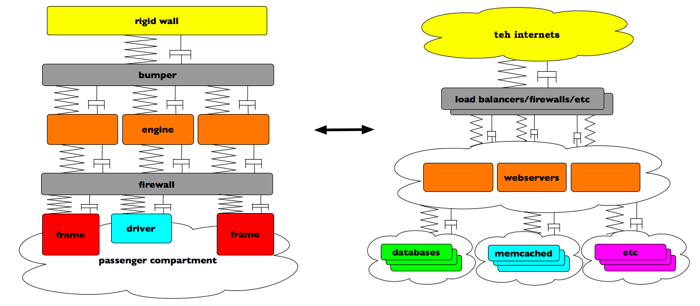

In the same way, web architectures can have storage layers, application layers, and all sorts of resources, each with their own “stress/strain” characteristics, and limits. Caching layers have limits that don’t just touch response time; they affect the origin servers or databases that they are caching for. Buffers all around can fill and empty. Network bandwidth expands and contracts, hopefully within its limits. And in most typical setups, webservers can push and pull on almost everything. The list goes on and on, up and down the stack.

My brain filled with this analogy sorta looks like this:

Thinking about web architectures like this helps me visualize the whole system, and gives context to the dynamics of the whole thing.

Now, at least one part of this analogy doesn’t play out very well. While mechanical systems can experience nonlinear and very dynamic change (in a car crash, for example), the properties of their components can’t be changed nearly as easily as those of the bits in a web architecture.

With websites, the introduction of a change (for example, a bad database query) can affect the entire system in a bad way, not just the component(s) that saw the change. Add a handful of milliseconds to a query that’s made often, and you’re now holding page requests up longer. The same thing applies to optimizations as well. Break that shitty query into two small fast ones, and watch how usage can change all over the system pretty quickly. Databases respond a bit faster and pages get built quicker, which means users click on more links, etc. This second-order effect of optimization is probably pretty familiar to those of us running sites of decent scale.
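A rough way to see why a few milliseconds matter is Little's Law: the average number of requests in flight equals the arrival rate times the average time each request spends in the system. The sketch below uses it with entirely hypothetical numbers (request rate, page build time, queries per page), and it assumes a steady state where the arrival rate doesn't change, so it ignores the second-order effect of users clicking more or less.

```python
def page_latency_s(base_s, query_s, queries_per_page):
    """Page build time: fixed work plus the time spent in a repeated query."""
    return base_s + query_s * queries_per_page


def in_flight_requests(arrival_rate_per_s, latency_s):
    """Little's Law: average concurrency = arrival rate x average latency."""
    return arrival_rate_per_s * latency_s


# Hypothetical site: 500 page requests/sec, 80 ms of non-query work,
# and one query that runs 10 times per page.
rate = 500.0
before = page_latency_s(0.080, 0.004, 10)  # query takes 4 ms
after = page_latency_s(0.080, 0.009, 10)   # same query, 5 ms slower

busy_before = in_flight_requests(rate, before)
busy_after = in_flight_requests(rate, after)

print(f"page latency: {before * 1000:.0f} ms -> {after * 1000:.0f} ms")
print(f"requests in flight: {busy_before:.0f} -> {busy_after:.0f}")
```

In this made-up case, 5 ms on one query means roughly 25 more requests held open at any moment, each one occupying a webserver process and probably a database connection. Run the arithmetic the other way and you get the optimization side of the story.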

Back to our mechanical analogy. Imagine if you could magically change the tensile properties of the steel in your car’s engine block. Small stresses or strains within the engine might then not add up in the same way, and that would affect the heat it generates, putting more load on your cooling system. Or your pistons might not deform those few microns that they used to at 4500 RPM. And so on and so on. Small changes can balloon into large ones pretty quickly. Insert something corny here about holistic and systemic interconnectedness, butterfly effects, etc.

Now consider a pit crew that could, remotely and instantly, change the density of the rubber in a race car’s tires. While it’s racing. Even better: imagine if the car could detect the conditions on the road, conditions within the car, and conditions with the driver, and could adjust the material properties of the tires, the fuel mixture, or the stiffness of each part of the suspension, all automatically. While it’s racing. That would be insanely cool, to say the least.

Of course, on the web side of this analogy, these changes happen all the time to individual components. Since we make a decent number of changes to flickr.com on a daily basis (see bottom of this page), we can sometimes see dramatic results from those changes in the same way. Squeeze out some more speed with a recompile or upgrade, extend the cache expiry time of an object, or tighten up a slowish database query, and all of a sudden your whole system’s performance can look very different. So with web architectures, you can actually change the components while you’re racing.

This game of ‘follow-the-bottleneck’ is in many ways inevitable and unavoidable, but that’s ok. It’s yet another motivator for capacity planning and future development. One of our constantly moving goals is to automate, in whatever way we can, the absorption of each component’s pushes and pulls on the entire system.

In the web operations world, there’s a term for being able to make those instantaneous changes while you’re racing: automated infrastructure.

But that’s another topic entirely. 🙂

That’s a neat analogy! One of the most “ahh ha” moments I remember from college is that mechanical systems and electronic systems can be modeled in the same manner, i.e. resistor == dashpot, etc.

I hadn’t thought about it on a larger scale, so that will be neat. I’m starting to wonder if the equations could be similar as well and possibly used for benchmarking / capacity planning.

Unfortunately I don’t remember my Laplace Transforms well enough!

I drew a comparison to axle load in your prior blog, but I like your race car analogy even better!

it’s much faster 🙂 …and it better defines automated infrastructure

They’re not there yet, but the Indy and F1 computer hookup *does* give the pit crew the “heads up” [while the car’s on the track] for whatever wing/tire/fuel changes are needed on its next visit.
